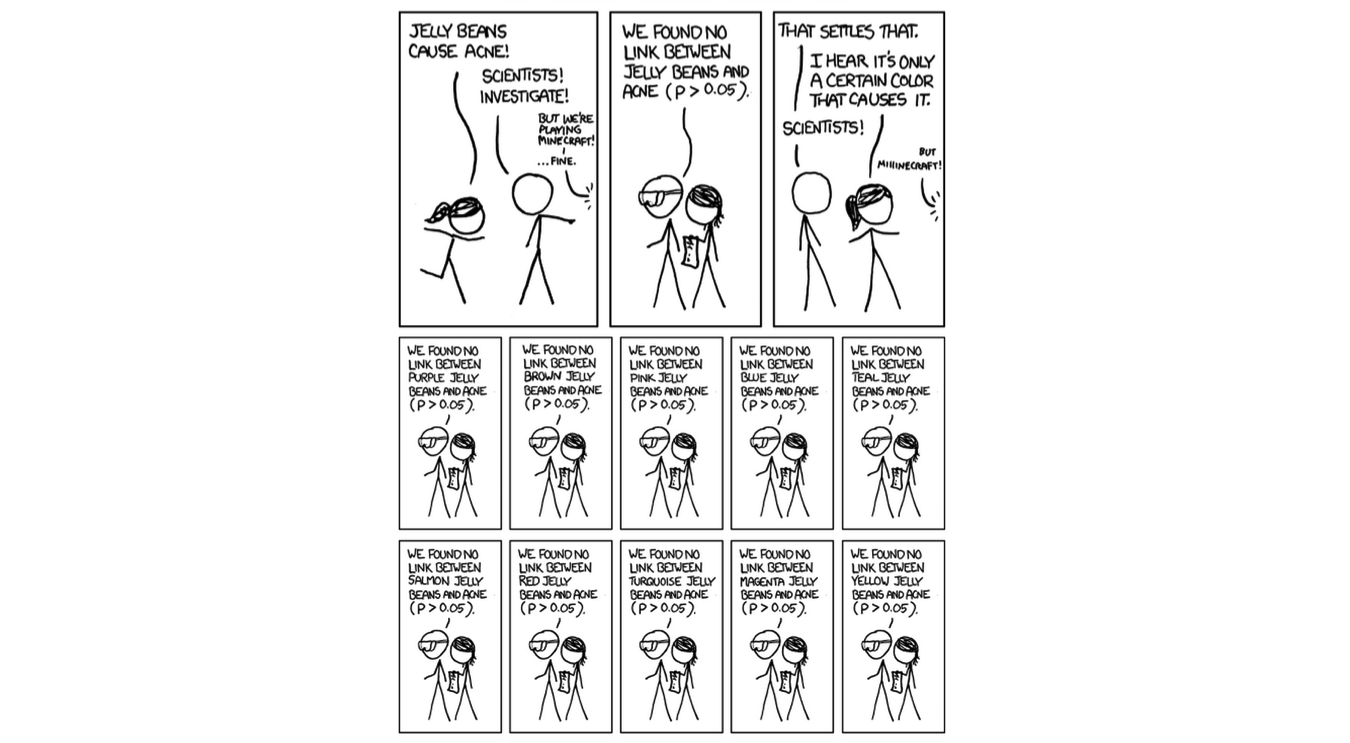

A year ago, I published an article about motivated reasoning and how it can damage the data analytics process. It is part of a blog series on cognitive biases and logical fallacies that data analysts should avoid. Today I’d like to extend this conversation into a topical matter: p-hacking, also known as data fishing.

To understand p-hacking, let’s look first at the p-value. Leaving statistical jargon aside, we use the p-value to understand how confident we should be when observing a sample: the smaller the p-value, the less likely it is that a pattern this strong would show up by chance alone. The challenge comes into play when the observations from this sample are extrapolated across the entire population. You may have seen this in election poll surveys.

Where a small set of observations is used to infer how a population voted, the p-value indicates how significant a conclusion may be. Essentially, it depends on the size of the sample relative to the population and on the size of the trend (the percentage breakdown of votes).

Now when we look at a study (medical trial, crime statistics, voting stats), we should always look out for the p-value. However, in recent times statisticians have noted that many studies that cite “highly reliable” p-values (usually p < 0.05) have been subject to the dubious practice of “p-hacking”, or data dredging. P-hacking is the exercise of fishing through data looking for correlations that have a p-value below your threshold, and then reporting those correlations as statistically significant without assessing causality.
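To make the fishing concrete, here is a minimal Python sketch of data dredging on pure noise. It is an illustration under stated assumptions, not a recipe from any particular study: two groups of voters are simulated with the same true support rate, they are compared with a two-proportion z-test (normal approximation), and the comparison is repeated many times while keeping only the smallest p-value. The function names, the group size of 200 and the 50% support rate are all hypothetical choices made for this example.

```python
import random
from statistics import NormalDist


def two_proportion_p_value(hits_a, n_a, hits_b, n_b):
    """Two-sided p-value for a difference in proportions (normal approximation)."""
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (hits_a / n_a - hits_b / n_b) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def dredge(num_comparisons, group_size, rng, true_rate=0.5):
    """Run many comparisons on pure noise and return the smallest p-value."""
    p_values = []
    for _ in range(num_comparisons):
        # Both groups share the same true rate: any "difference" is chance.
        a = sum(rng.random() < true_rate for _ in range(group_size))
        b = sum(rng.random() < true_rate for _ in range(group_size))
        p_values.append(two_proportion_p_value(a, group_size, b, group_size))
    return min(p_values)


def false_discovery_share(num_studies, num_comparisons,
                          group_size=200, alpha=0.05, seed=0):
    """Share of simulated studies that can report at least one p < alpha."""
    rng = random.Random(seed)
    found = sum(
        dredge(num_comparisons, group_size, rng) < alpha
        for _ in range(num_studies)
    )
    return found / num_studies


if __name__ == "__main__":
    print("one planned comparison:", false_discovery_share(500, 1))
    print("best of 20 comparisons:", false_discovery_share(500, 20))
```

With a single planned comparison, the share of "significant" results should sit near the 0.05 threshold. With twenty independent looks at the same kind of noise, the chance of at least one p < 0.05 is 1 − 0.95^20, roughly 64%, even though there is nothing to find. That gap is what p-hacking exploits.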
